Matillion and dbt are two outstanding tools for data orchestration, modeling and transformation. Many data teams wonder which is the best data transformation tool for their stack: Matillion vs dbt? But it doesn’t need to be one or the other.

Spaulding Ridge is a big fan (and a premier partner) of both dbt Labs and Matillion, and we’ve used both together on a wide range of client engagements. Notably, Matillion’s dbt integration makes it easier than ever to take advantage of the strengths of each tool. In this article, we’ll take a closer look at:

- The benefits of using both Matillion and dbt

- How the Matillion dbt integration works

- Why we chose Matillion and dbt for our client and how we set it up

- The positive results they’re seeing

ELT’s Best Kept Secret: Matillion’s dbt Integration

Launched in April 2023, Matillion’s dbt integration lets you orchestrate your ingestion and transformation jobs in one place. Because all functionality is packaged into a single platform, users can load their source data and immediately begin applying dbt functionality. This improves efficiency and shortens time to value for dbt users. Basically, it gives you the best of both worlds.

3 Benefits of Orchestrating with Matillion and Modeling with dbt

1: Seamless integration with dbt version control

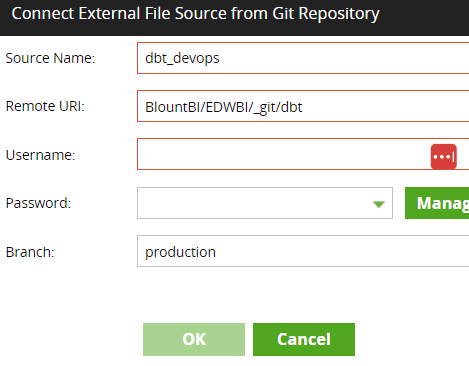

Git versioning is interwoven into the dbt experience. Within Matillion, users can specify the Git repository that contains their dbt code, as well as the branch that holds the version of the code they want to run. This allows users to:

- Develop their code

- Save it within the repo/branch containing the code

- Immediately make it available for use within a Matillion pipeline

2: Validating data quality with tests

As an ELT platform, Matillion allows users to extract and load source data and use Snowflake as both the storage and processing layer for transformations. With dbt, users can then run tests against the transformed data to validate its quality. This gives users confidence that any “dirt” has been scrubbed from the dataset, and that they will be notified if any remains.
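To make the idea of a data quality test concrete, here is a minimal Python sketch of the logic behind two of dbt's built-in generic tests, `not_null` and `unique`. In a real project these tests are declared in the dbt project and run as SQL in the warehouse, so this is only an illustration of what they check, not how dbt implements them. The `orders` rows and the function names are hypothetical.

```python
from collections import Counter
from typing import Any, Dict, List

Row = Dict[str, Any]


def not_null_failures(rows: List[Row], column: str) -> List[Row]:
    """Return the rows where the column is missing or None."""
    return [row for row in rows if row.get(column) is None]


def unique_failures(rows: List[Row], column: str) -> List[Any]:
    """Return the non-null values that appear more than once in the column."""
    counts = Counter(row[column] for row in rows if row.get(column) is not None)
    return [value for value, count in counts.items() if count > 1]


# Hypothetical transformed data with one null and one duplicate key.
orders = [
    {"order_id": 1, "customer_id": 10},
    {"order_id": 2, "customer_id": None},
    {"order_id": 2, "customer_id": 12},
]

print(not_null_failures(orders, "customer_id"))
print(unique_failures(orders, "order_id"))
```

A test passes when it returns no failing rows or values. Any result that is not empty is what would surface as a failed test in the pipeline.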

3: Utilizing Matillion workflow controls to apply error handling

Every component in a Matillion pipeline (including data quality tests run by dbt) ends in either a Success or a Failure outcome. By attaching Success or Failure connectors to subsequent components, users can automate downstream actions such as email sends, Slack notifications, or any other post-dbt step. What happens next depends on how the user designs their Matillion pipeline.

How to Use the dbt Commands Component in Matillion

The feature is actually very simple to use! Here’s the step-by-step breakdown. First, we have to configure our git repo as a file source in Matillion.

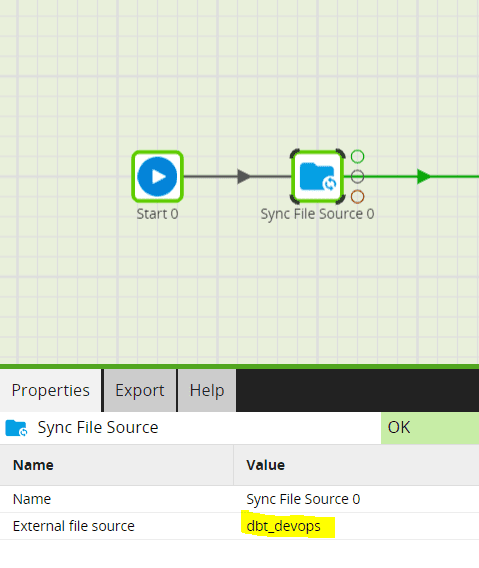

We then use the “Sync File Source” component as the first step in our dbt job.

From there, all we have to do is add as many dbt Core components as we need to perform our transformations. The components accept commands exactly as dbt would, so you can enter the same syntax you would use on the dbt command line.

In our example job, one component runs the “dbt deps” command to install all dependencies, and another runs a “dbt build --select tag” command to build only the resources that match a tag. After the build command runs, an “End success” component closes out the job if it ran successfully. If the job fails, a “Send Failure Email” component alerts us via email.
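For readers who want to see the same flow outside the Matillion canvas, the job described here can be approximated in a few lines of Python. This is a sketch under stated assumptions, not Matillion's API: the `run_dbt_job` function, the `Runner` type, and the `nightly` tag used below are all hypothetical, and the only real commands are `dbt deps` and `dbt build --select tag:<name>`. The runner is injectable so the flow can be exercised without dbt installed.

```python
import subprocess
from typing import Callable, Sequence

# A runner takes a command as a list of arguments and returns its exit code.
Runner = Callable[[Sequence[str]], int]


def shell_runner(command: Sequence[str]) -> int:
    """Run the command as a subprocess and return its exit code."""
    return subprocess.run(list(command)).returncode


def run_dbt_job(tag: str, run: Runner = shell_runner) -> str:
    """Run dbt deps, then dbt build for one tag, stopping at the first failure."""
    commands = [
        ["dbt", "deps"],                             # install package dependencies
        ["dbt", "build", "--select", f"tag:{tag}"],  # build resources with this tag
    ]
    for command in commands:
        if run(command) != 0:
            # Failure path: the pipeline's "Send Failure Email" step goes here.
            return "failure: " + " ".join(command)
    # Success path: the pipeline's "End success" step goes here.
    return "success"
```

Calling `run_dbt_job("nightly")` from a directory that contains a dbt project would run both commands in order. Any nonzero exit code, including one caused by a failed dbt test, takes the failure path, which mirrors how the Failure connector routes to the email component.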

Why dbt and Matillion?

Now let’s see a real-world use case to understand why we used the Matillion and dbt integration and how we set it up.